Diagnosing Critical Thinking in K-12 AI-Assisted Learning

A Distributed Cognition Approach

Abhinav Kishore · Georgia Institute of Technology · CS 6795 Spring 2026

Introduction

Why this research matters

The Problem

AI assistance (Shen & Tamkin, 2026)

independent reasoning (Lee et al., 2024)

When does AI build understanding, and when does it just replace thinking?

Cognitive Science Grounding

Hutchins

Distributed Cognition: who's doing the cognitive work, student or AI?

Flavell

Metacognition: is the student monitoring their own thinking?

Gentner

Structure-Mapping: did they learn deep structure or just surface features?

Together: a complete diagnostic picture of who is doing the thinking, how self-aware the student is, and whether learning transfers.

Design & Method

How we built and tested the diagnostic tool

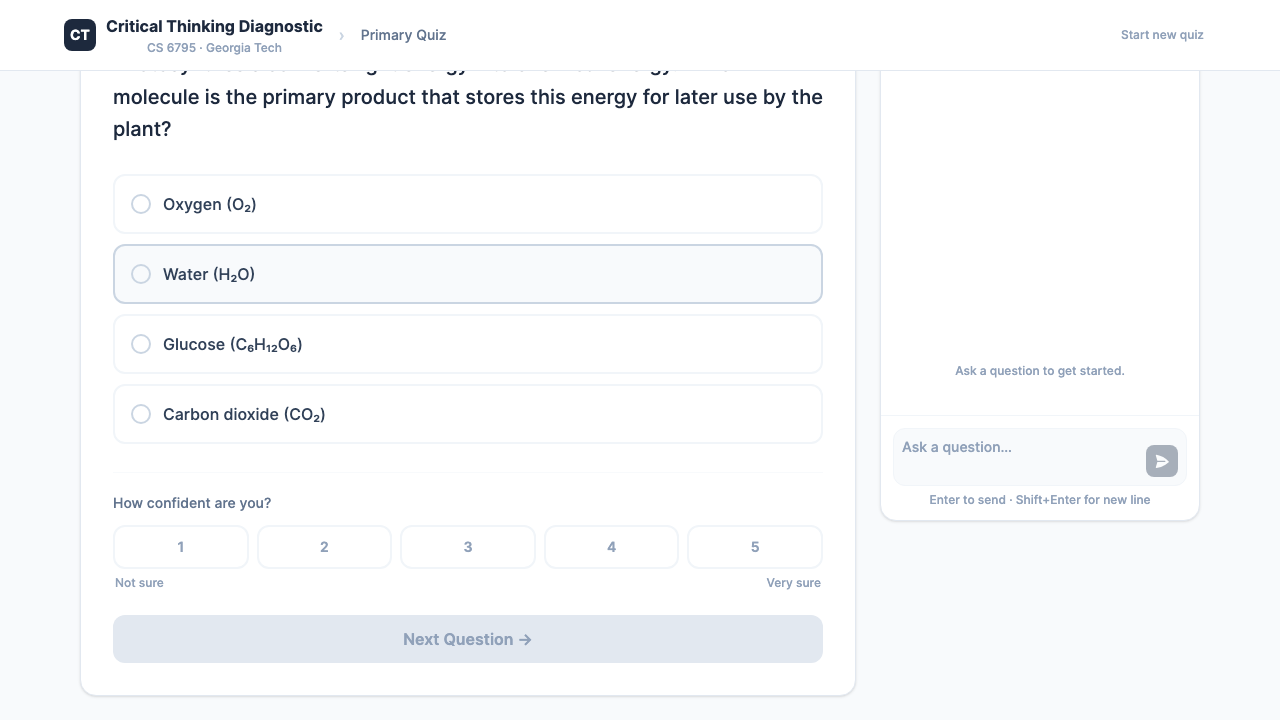

The Diagnostic Tool

React + Hono + Claude. Each student completes three stages under one of three AI conditions.

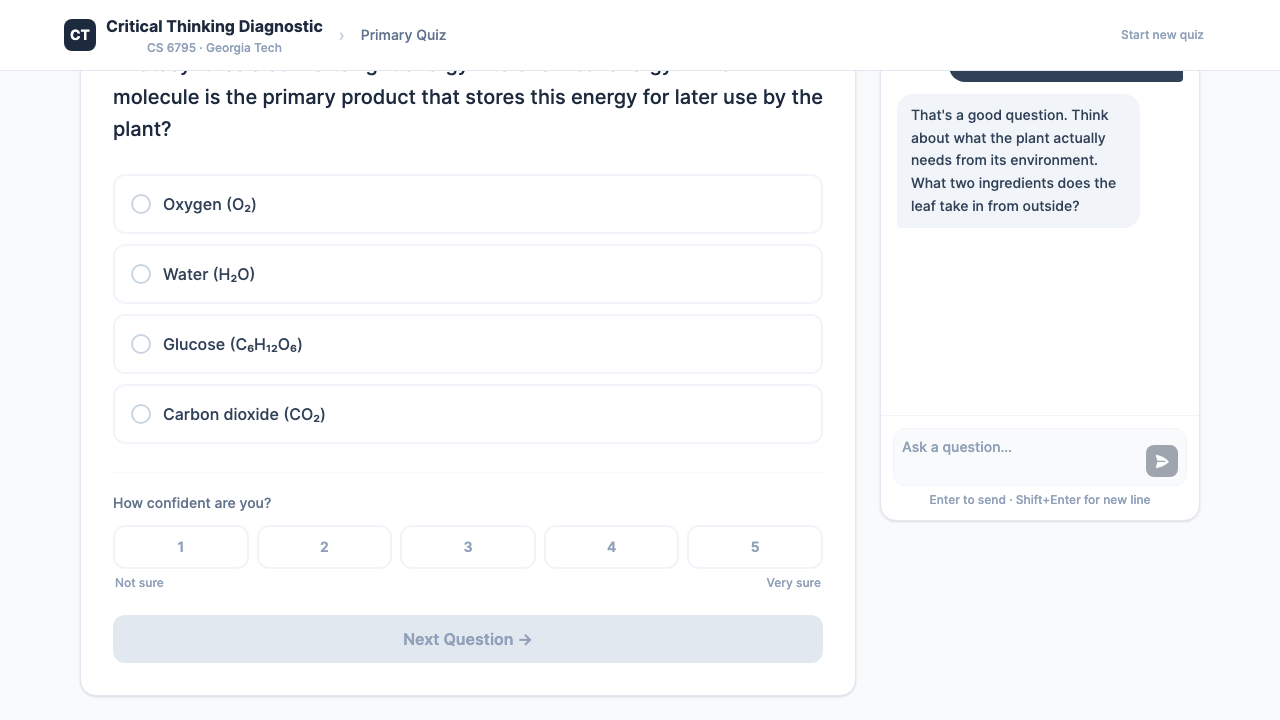

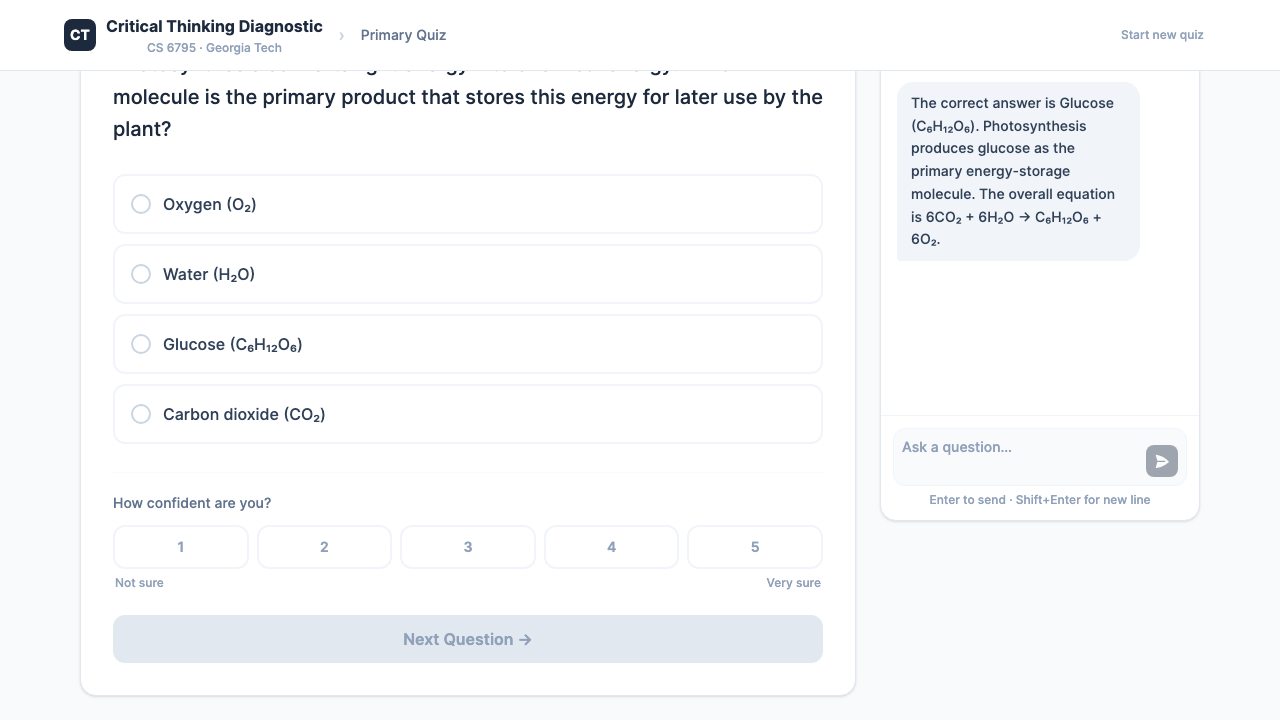

1. Primary Quiz

8 questions with optional AI chat

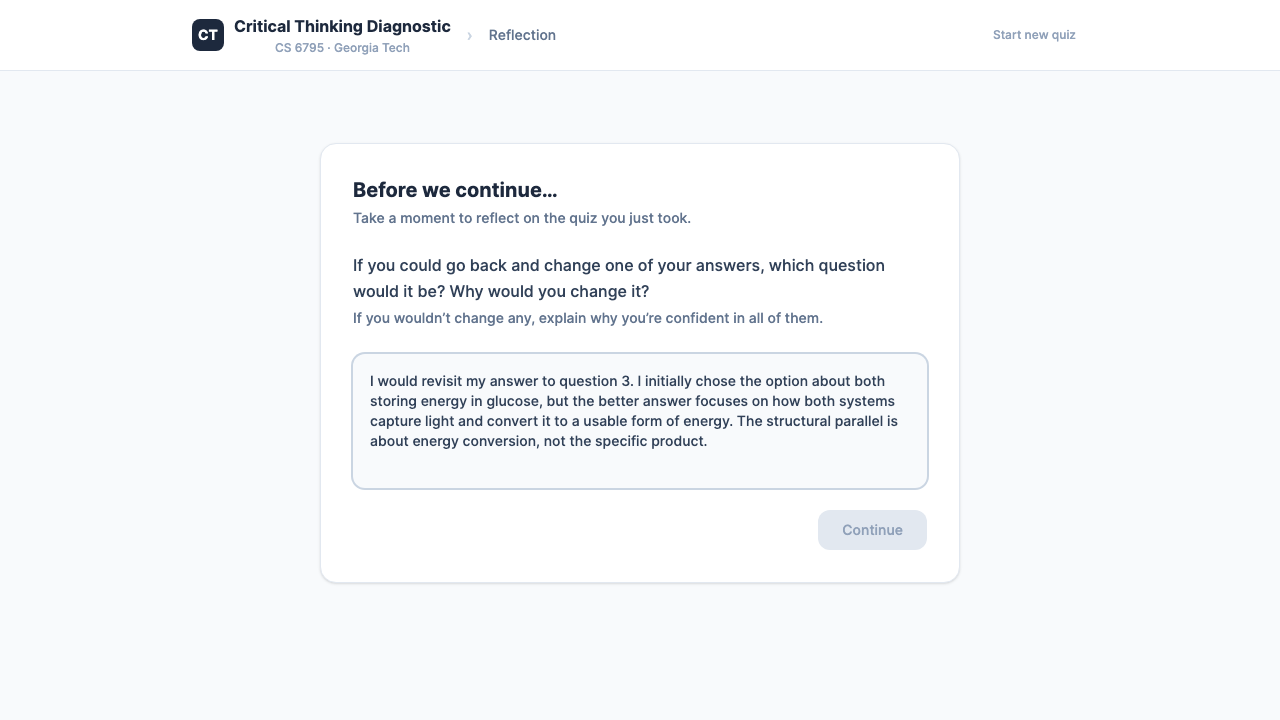

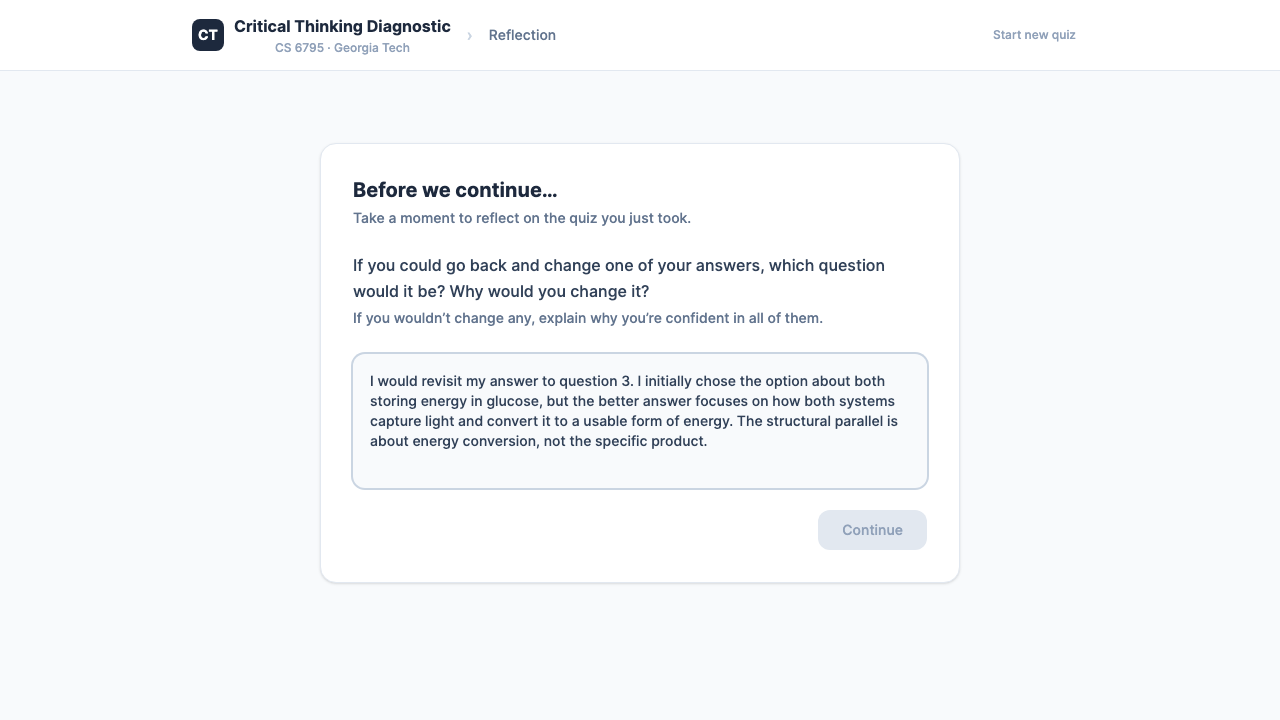

2. Reflection

Metacognitive self-regulation prompt

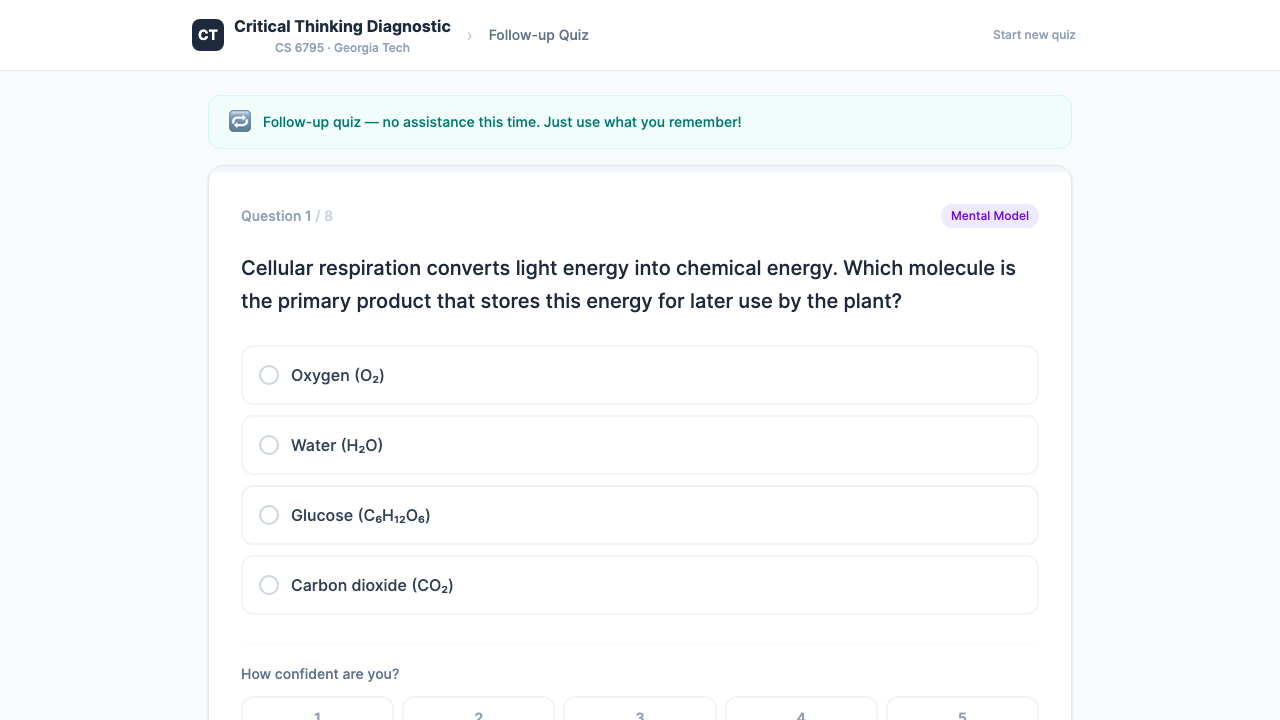

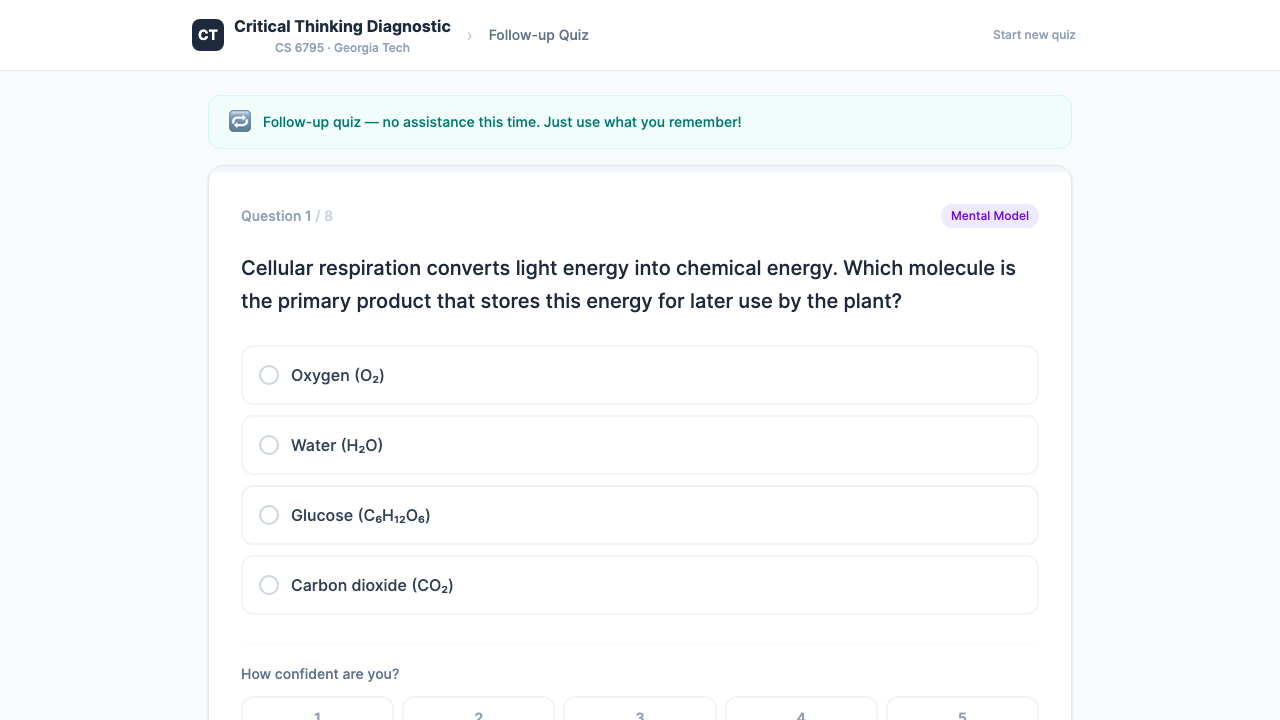

3. Transfer Quiz

Same structure, new surface features

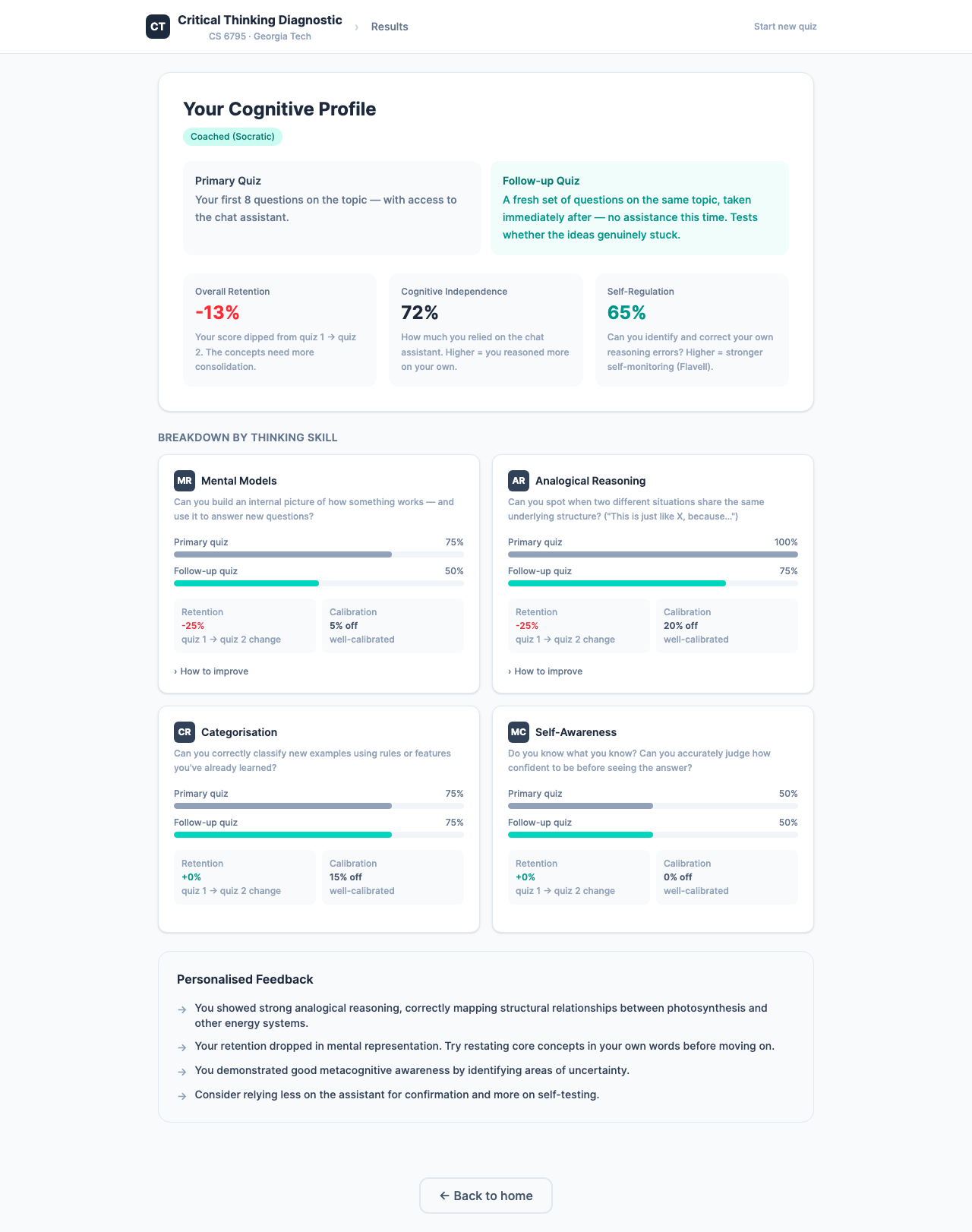

Diagnostic Profile

Four cognitive dimensions scored across primary and transfer quizzes.

Three Conditions

A — Control

No AI assistant

B — Socratic

Guiding questions only

C — Unrestricted

Full AI answers

Condition B: Socratic Coach

Condition C: Unrestricted AI

Three Measurement Channels

Chat Classifier → Hutchins

Scores every message for cognitive independence

Reflection Rubric → Flavell

Scores self-regulation between questions

Transfer Quiz → Gentner

Structure-mapped follow-up measures retention

Reflection interstitial (Flavell)

Transfer follow-up quiz (Gentner)
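As a rough illustration of how the transfer channel could yield a retention number, the sketch below treats retention as the change in accuracy, in percentage points, from the primary quiz to the transfer quiz. That definition is an assumption, as are the names `QuizResult`, `accuracyPct`, and `retentionDeltaPp`; the slides do not state the actual formula, and the example scores are made up.

```typescript
// Hypothetical shape for one quiz attempt; not taken from the actual tool.
interface QuizResult {
  correct: number; // number of questions answered correctly
  total: number;   // number of questions in the quiz (8 in the primary quiz)
}

// Accuracy as a percentage in the range 0..100.
function accuracyPct(q: QuizResult): number {
  if (q.total <= 0) throw new Error("quiz must have at least one question");
  return (100 * q.correct) / q.total;
}

// ASSUMPTION: retention = transfer accuracy minus primary accuracy,
// expressed in percentage points (pp). Negative means a drop on transfer.
function retentionDeltaPp(primary: QuizResult, transfer: QuizResult): number {
  return accuracyPct(transfer) - accuracyPct(primary);
}

// Illustrative (made-up) scores: 7/8 on the primary quiz, 5/8 on transfer.
const delta = retentionDeltaPp({ correct: 7, total: 8 }, { correct: 5, total: 8 });
console.log(delta); // -25
```

A difference score keeps the primary quiz as each session's own baseline, so a student who starts low and stays low reads as flat rather than as a loss.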

Results

60 sessions • 4 archetypes • 3 conditions • 5 runs

Four Learner Archetypes

Synthetic personas used to simulate 60 sessions (4 archetypes × 3 conditions × 5 runs).

Deep Learner

Reasons from first principles. High accuracy, well-calibrated confidence. Recognizes deep structure on transfer tasks. Rarely uses AI for answers.

Surface Memorizer

Relies on keyword matching. High confidence but poor calibration. Struggles when surface features change. Heavy AI usage in condition C.

Overconfident Guesser

Goes with gut instinct. Consistently overestimates understanding. Flat retention because there is no deep learning to lose. Ignores AI coaching.

Metacognitively Aware

Average knowledge but strong self-monitoring. Highly calibrated confidence. Maintains or improves on transfer. Uses AI strategically to fill gaps.
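The 4 × 3 × 5 design above can be enumerated as a simple grid. This is a minimal sketch, not the simulation code itself: the identifiers (`ARCHETYPES`, `CONDITIONS`, `SessionPlan`, `buildSessionGrid`) and the label strings are hypothetical, chosen to mirror the slide text.

```typescript
// Labels mirror the slides; the identifiers and data shape are assumptions.
const ARCHETYPES = [
  "deep_learner",
  "surface_memorizer",
  "overconfident_guesser",
  "metacognitively_aware",
] as const;
const CONDITIONS = ["A_control", "B_socratic", "C_unrestricted"] as const;
const RUNS_PER_CELL = 5;

type Archetype = (typeof ARCHETYPES)[number];
type Condition = (typeof CONDITIONS)[number];

interface SessionPlan {
  archetype: Archetype;
  condition: Condition;
  run: number; // 1-based run index within an archetype/condition cell
}

// Cross every archetype with every condition, repeated RUNS_PER_CELL times.
function buildSessionGrid(): SessionPlan[] {
  const grid: SessionPlan[] = [];
  for (const archetype of ARCHETYPES) {
    for (const condition of CONDITIONS) {
      for (let run = 1; run <= RUNS_PER_CELL; run++) {
        grid.push({ archetype, condition, run });
      }
    }
  }
  return grid;
}

const sessions = buildSessionGrid();
console.log(sessions.length); // 60
```

Each archetype/condition cell holds 5 runs, so any per-condition figure pools 20 sessions across the four archetypes.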

Retention by Condition

(pooled, 95% CI ±7pp)

(near baseline)

from deep learners (any condition)

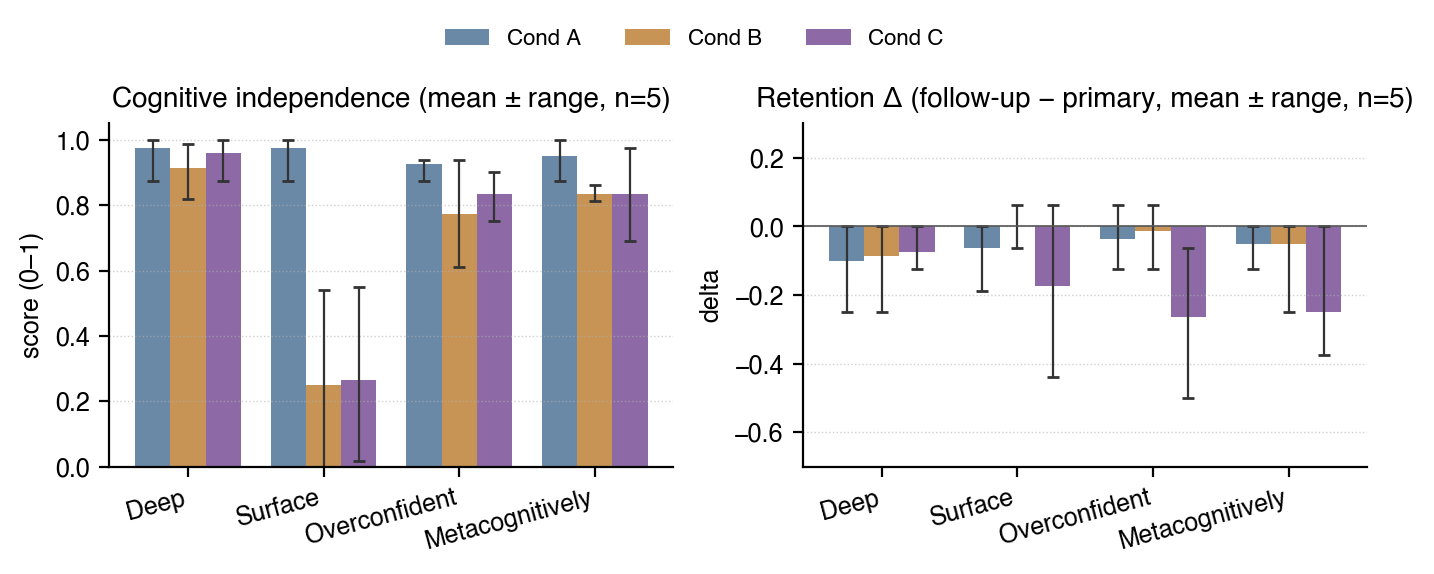

Cognitive Independence & Retention

Deep learners maintain independence and are buffered against retention loss. Surface memorizers offload and lose the most.

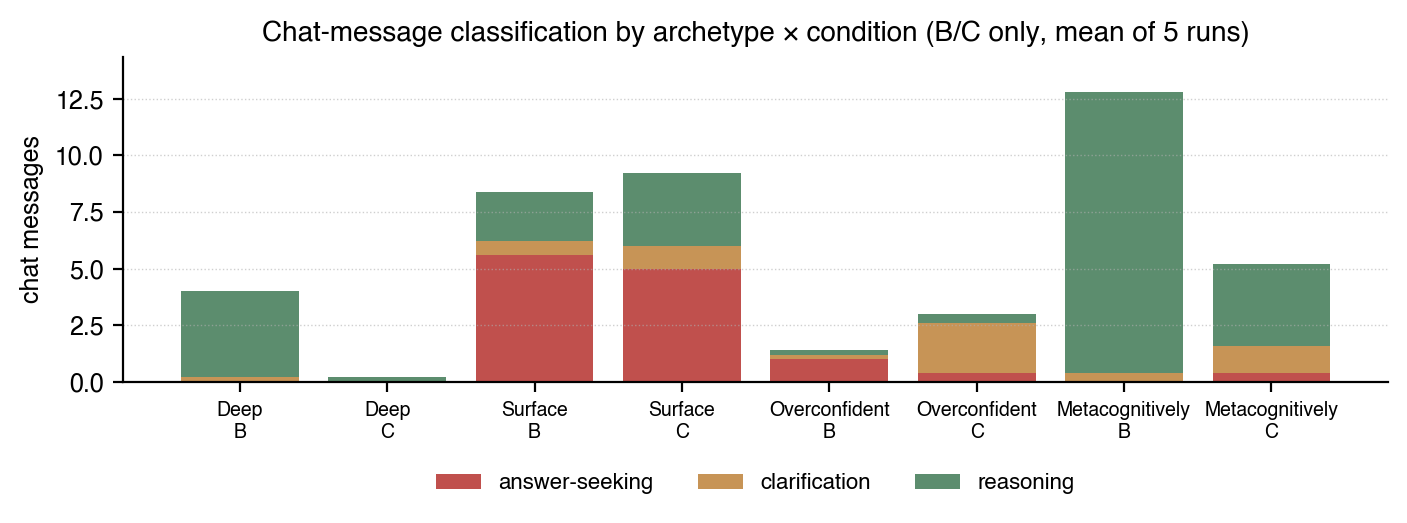

Chat Behavior by Learner Type

Surface memorizers send 5–6 answer-seeking messages; deep learners send zero.

External Validation

vs. CIMA human-coded corpus

ReflectSumm human labels

with GPT-5

Conclusion

What we learned and what it means

Key Takeaways

AI is not the enemy. Offloading is. Socratic coaching was as safe as no AI at all. The problem is handing over thinking before articulating it yourself.

Measure independence, not just performance. A score of 100% with heavy AI help is not the same as 90% earned independently.

Use transfer tasks. A single quiz score doesn't reveal whether understanding is genuine.

Future Work

- Validation with real K-12 students

- Longitudinal tracking of diagnostic profiles

- Adaptive systems matching AI mode to learner needs

Thank you

Abhinav Kishore • akishore33@gatech.edu